88% of AI pilots fail to reach production. It's almost never the AI's fault. Here's the structural breakdown that kills most initiatives and what the 12% that succeed do differently.

For every 33 AI pilots that launch, roughly 4 make it to real production.

That's not a model problem. That's a structure problem.

If you've run an AI initiative that went nowhere (a tool rollout that fizzled, an agency engagement that produced a demo and then silence, an internal experiment that never became a workflow), this post is the postmortem you didn't get.

The number that reframes everything

88% of AI pilots fail to reach production. That stat came out of a 2026 CIO survey, and it's been bouncing around boardrooms ever since. But the number that matters more is this: 95% of AI initiatives fail to deliver expected business outcomes.

Think about that for a second. Not 30%. Not half. Nineteen out of twenty.

The AI market is not short on tools, investment, or ambition. It is producing failure at industrial scale. And almost none of that failure is because the AI didn't work.

The demos work. The pilots work. It's what comes after that falls apart.

What failure actually looks like

When people imagine AI project failure, they picture a system crash. A chatbot meltdown. A public embarrassment. Some catastrophic output that triggers a rollback.

That's not what happens.

What actually happens is nothing. The pilot ends. The agency sends a final report. The tool sits in a Notion doc titled "AI Initiatives Q3." Someone meant to pick it up again. Nobody did. Life continued as before.

It doesn't feel like failure. It feels like delay. Then one day you realise it's been eight months and nothing changed and you're not sure who to ask about it.

That's the dominant AI failure mode in 2026 for small and mid-sized businesses. Not a crash. A slow fade. A pilot that was never designed to graduate into anything.

The reason it happens isn't laziness or bad intent. It's structural. The same five structural problems show up again and again.

The five reasons pilots die

1. No success metric before launch

73% of failed AI pilots had no clearly defined success metric before they started. Not before they finished. Before they started.

That sounds like an obvious mistake. It isn't obvious when you're in it. The pressure is to move, to show progress, to get something running. Defining success feels like admin. It can wait.

It can't wait. Without a baseline and a target, you can't tell whether the pilot worked. You end the engagement with vibes. The agency says it went well. Your team says it was useful. Nobody can say by how much, compared to what, or whether to continue.

The fix is simple but requires discipline: before anything gets built, write down what success looks like at 30, 60, and 90 days. A specific number. A specific workflow metric. Something you can measure without asking the agency.

If you can't define it, you're not ready to start.
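If it helps to make that concrete, here is a minimal sketch of what "written down" could look like. Everything in it is an assumption for illustration: the metric name, the baseline, and the 30/60/90-day targets are hypothetical, and the only point is that a baseline and a target exist before the build does.

```python
from dataclasses import dataclass


@dataclass
class Checkpoint:
    day: int        # days since launch (30, 60, 90)
    target: float   # value the metric should reach by this day


@dataclass
class SuccessMetric:
    name: str
    baseline: float                # measured before the pilot starts
    checkpoints: list[Checkpoint]
    lower_is_better: bool = True

    def on_track(self, day: int, observed: float) -> bool:
        """Compare an observed value with the target for one checkpoint day."""
        target = next(c.target for c in self.checkpoints if c.day == day)
        if self.lower_is_better:
            return observed <= target
        return observed >= target

    def change_vs_baseline(self, observed: float) -> float:
        """Relative change from the baseline; -0.25 means 25% lower."""
        return (observed - self.baseline) / self.baseline


# Hypothetical example: hours per week spent on manual invoice triage.
metric = SuccessMetric(
    name="invoice_triage_hours_per_week",
    baseline=20.0,
    checkpoints=[Checkpoint(30, 16.0), Checkpoint(60, 12.0), Checkpoint(90, 8.0)],
)

print(metric.on_track(30, observed=15.0))
print(metric.change_vs_baseline(15.0))
```

With something like this in place, the 30-day conversation is a comparison of two numbers rather than a round of opinions, and nobody needs the agency in the room to make it.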

2. Demo data isn't production data

This one kills technically successful pilots.

The demo runs on a cleaned spreadsheet, a curated dataset, a controlled input. It's impressive. The output is exactly what you wanted. Everyone's excited.

Then someone tries to connect it to the actual data (messy CRM records, inconsistent naming conventions, five years of legacy formatting, documents that live in three different systems with different access permissions) and the whole thing grinds to a halt.

Data readiness is the single biggest technical dealbreaker between a pilot and production. It's not glamorous, so nobody leads with it. Agencies don't raise it because it delays the start. Internal teams don't raise it because it sounds like an obstacle.

It is an obstacle. Raise it before the first line of automation gets written.

If you don't know the state of your data, spend a week finding out before you commission a build. What you learn will either speed the project up or save you from a very expensive dead end.
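A week of finding out can start very small. The sketch below is one possible first pass, not a full audit: it assumes you can export records as a list of rows, and the field names and sample rows are hypothetical. It checks two of the problems described above, missing values and inconsistent naming.

```python
def audit_records(records, required_fields):
    """Return, per required field, the share of records where it is missing or blank."""
    total = len(records)
    report = {}
    for field in required_fields:
        missing = sum(1 for r in records if not str(r.get(field) or "").strip())
        report[field] = missing / total if total else 0.0
    return report


def naming_variants(records, field):
    """Group raw values that differ only by case or surrounding whitespace."""
    groups = {}
    for r in records:
        raw = r.get(field)
        if raw is None or not str(raw).strip():
            continue
        key = str(raw).strip().lower()
        groups.setdefault(key, set()).add(str(raw))
    # Keep only values that appear under more than one spelling.
    return {k: sorted(v) for k, v in groups.items() if len(v) > 1}


# Hypothetical CRM export; field names and values are illustrative only.
crm_rows = [
    {"company": "Acme Ltd", "email": "ops@acme.example", "owner": "Sam"},
    {"company": "acme ltd ", "email": "", "owner": "Sam"},
    {"company": "Birch & Co", "email": None, "owner": ""},
    {"company": "Birch & Co", "email": "hi@birch.example", "owner": "Lee"},
]

missing = audit_records(crm_rows, ["company", "email", "owner"])
variants = naming_variants(crm_rows, "company")
print(missing)
print(variants)
```

On this sample, half the rows have no email and one company appears under two spellings. Real data will need more checks than these (duplicates, stale records, access permissions), but even this much tells you whether the build can start on the data as it is.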

3. No internal owner when the agency leaves

Agencies build things. Then they leave. That's the model.

What often doesn't exist is a person inside your business who owns what they built. Someone who understands how it works, knows what to do when it breaks, and has the authority to iterate and improve it.

Without that person, the workflow is on life support from day one. Every question goes back to the agency. Every small tweak costs a change request. When the agency's main contact moves on, the institutional knowledge walks out with them.

Projects without an internal owner fail at three times the rate of those that have one. The owner doesn't need to be technical. They need to care about the outcome, have time allocated to it, and have the authority to make decisions.

Name that person before the engagement starts. If you can't name them, you're not ready.

4. Scope too broad

"We want an AI assistant that can handle customer queries, summarise internal documents, help with marketing copy, and flag anomalies in our reporting."

That is four projects. Presented as one. With a single budget and a single timeline.

Broad scope is how pilots get ambitious on paper and produce nothing in practice. The more use cases you try to cover, the less depth you get on any of them. The result is a system that does everything adequately and nothing well enough to change how your business actually operates.

The businesses that succeed with AI in 2026 are the ones that go narrow first. One workflow. One integration. One defined outcome. Prove it works, measure it, then expand.

An AI that reliably does one specific thing is worth more than an AI assistant that can theoretically do twenty things but gets used for none of them.

If your scope covers more than one workflow, cut it. You can always add later.

5. Executive sponsorship evaporates by month three

56% of failed AI pilots lost executive sponsorship within the first six months. Projects without it fail at three times the rate of those that maintain it.

Here's how it goes. The CEO is excited about AI. They green-light the pilot. Then quarterly reviews come around, a bigger priority lands, the pilot is "tracking well" so it doesn't need attention, and within a few months it's running without anyone senior paying attention to it.

When a roadblock hits (and one always does), there's nobody with authority to clear it. The project stalls. The team loses momentum. The agency keeps billing. Nothing moves.

Executive sponsorship doesn't mean the CEO needs to attend every meeting. It means someone at a senior level has this on their dashboard, reviews it monthly, and will make decisions when decisions are needed.

If that person doesn't exist, the pilot is running without a safety net.

What the 12% do differently

For every 33 pilots that launch, four graduate to production. Here's what those four have in common.

They started narrow and defined. One workflow, one owner, one metric. Not a vision for AI transformation. A specific, bounded experiment with a clear pass/fail condition.

They treated data as a precondition, not a detail. Before the agency scoped the build, they audited what data existed, where it lived, and whether it was clean enough to run on. They found the gaps early.

They built for handoff from day one. The agency was instructed to document everything, build on tools the client controls, and run training sessions before the engagement closed. The internal owner was in the room throughout, not just at the start and end.

They maintained a sponsor who reviewed progress against the defined metric every month. Not a status update. An actual number compared to the baseline.

And when the pilot worked (when the metric moved), they had a plan for what came next, rather than celebrating and moving on.

None of this is sophisticated. It's discipline applied to a stage of work that most businesses treat as informal.

Five questions to answer before the first meeting

If you're about to start an AI initiative (with an agency, with a tool, or internally), answer these before anything else.

1. What specific workflow are we automating, and what does it look like today? Not "we want to improve customer service." The exact process, the steps, the inputs, the outputs, the person who currently does it.

2. What does success look like at 90 days, and how will we measure it? A number. A baseline. A target. If you can't write this down, you can't evaluate whether it worked.

3. Who is the internal owner, and what time do they have allocated to this? A name. Not a team. Not "the ops function." One person with authority and time.

4. What data does this depend on, and is it accessible and clean? Where does it live? Who owns it? When was it last audited? What happens when it's missing or inconsistent?

5. Who is the senior sponsor, and how often will they review progress? Not "leadership is supportive." A specific person with a specific review cadence.

If any of these are unanswered, you're not ready to start. Get them answered first. The two hours it takes will save months of expensive inertia.
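If you want the check to be mechanical, the five questions reduce to a short gate. This is a rough sketch under stated assumptions: the keys and the sample answers are hypothetical, and a non-empty answer is treated as "answered", which says nothing about whether the answer is any good.

```python
# Keys are hypothetical labels for the five questions above.
READINESS_QUESTIONS = {
    "workflow": "What specific workflow are we automating?",
    "success_metric": "What does success look like at 90 days, and how is it measured?",
    "internal_owner": "Who is the internal owner, and what time do they have?",
    "data": "What data does this depend on, and is it accessible and clean?",
    "sponsor": "Who is the senior sponsor, and how often will they review progress?",
}


def unanswered(answers):
    """Return the keys of questions with no answer or only whitespace."""
    return [k for k in READINESS_QUESTIONS if not (answers.get(k) or "").strip()]


def ready_to_start(answers):
    """True only when every question has some answer written down."""
    return not unanswered(answers)


# Hypothetical draft: the owner is blank and the sponsor is missing entirely.
draft = {
    "workflow": "Weekly invoice triage, done by hand from a shared inbox",
    "success_metric": "Triage hours per week: baseline 20, target 8 at day 90",
    "internal_owner": "",
    "data": "Invoices in the finance inbox; last audited: unknown",
}

for key in unanswered(draft):
    print("Still open:", READINESS_QUESTIONS[key])
print("Ready to start:", ready_to_start(draft))
```

The gate is deliberately strict: one blank is enough to hold the start.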

Start with a blueprint, not a blank slate

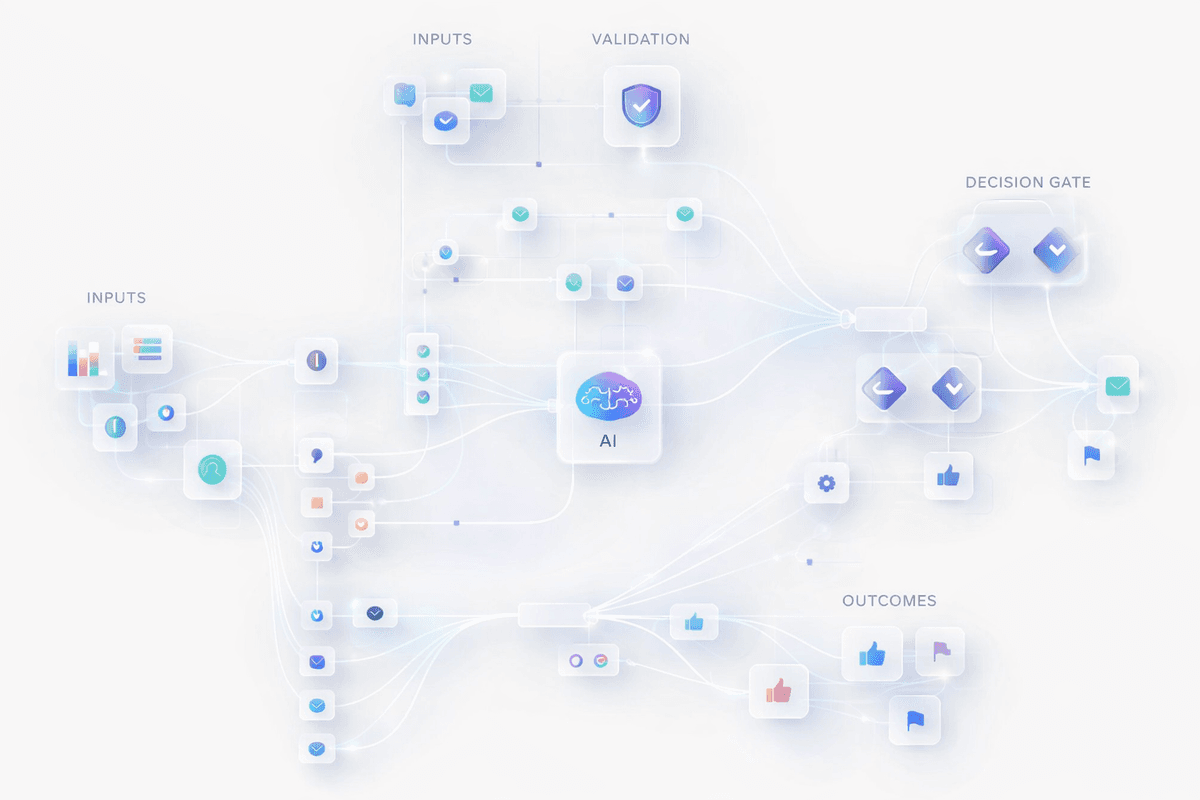

The structural problems above are solvable. What most businesses lack isn't capability. It's a starting framework that forces these questions before the work begins.

Our Blueprints are built for this. Pre-mapped AI workflow structures for specific business functions. Starting points that already have the scope, the owner structure, and the success metrics baked in. You're not designing from scratch. You're adapting something that's already been proven to graduate from pilot to production.

And if you're at the point of finding a partner to build with, Find a Provider connects you with implementation partners who have demonstrated real production deployments. Not just polished decks.

The AI isn't the problem. It almost never is.

The structure around it is. Fix that first, and the numbers change.