No AI agency is going to tell you you're not ready. Their incentive is to start the engagement, not disqualify the lead. Here are the five readiness checks to complete before any external conversation.

No AI agency is going to tell you you're not ready.

Think about why for a second. Their revenue starts when the engagement starts. Telling you to slow down, audit your data first, and build internal clarity before anything gets built is commercial suicide for them. It delays the invoice. It might cost them the deal. Some agencies genuinely believe they'll figure it out once they're inside. Some are right.

But when they're wrong, the cost lands entirely on your side of the table.

This isn't a hit piece on AI agencies. Most are trying to do good work in a market that moves fast and rewards confident pitching over hard questions. The problem is structural. The incentive to qualify you out doesn't exist in their business model. So almost nobody does it.

That means the qualifying has to be yours.

What the numbers actually look like

80% of AI projects fail to deliver their intended business value. That's not a fringe estimate. It comes from RAND Corporation's 2025 analysis, consistent with what Gartner, McKinsey, and BCG have been reporting for years.

Deloitte found that 42% of companies abandoned at least one AI initiative in 2025. The average sunk cost per abandoned initiative: $7.2 million. For a large enterprise, that's painful but survivable. For an SMB, it can be a company-defining mistake. When an AI project fails at a small business, there is rarely budget for a second attempt.

MIT's State of AI in Business 2025 found that 95% of enterprise AI initiatives deliver zero measurable ROI. Not partial ROI. Zero.

Here's where it lands: Gartner found that data quality and readiness issues appeared in 43% of failed AI projects. 76% of SMBs cited insufficient internal knowledge about AI requirements as a major challenge. And organisations that run formal readiness assessments before beginning implementation see success rates jump from 14% to 47%.

The failure almost always starts before the agency arrives.

What "not ready" actually looks like

It doesn't look like obvious chaos. From the outside, businesses that aren't ready for AI implementation look like normal, busy companies that have decided it's time to move.

Here's what it actually looks like.

The brief going into the agency is vague. "We want to use AI to improve our operations." The agency nods. They'll help you define it in discovery. Discovery takes six weeks. The scope that comes out of it is ambitious and expensive. You feel behind, so you say yes.

The data the build depends on sits across three systems, maintained by different people, with no consistent formatting. This surfaces in week four of a twelve-week engagement. The agency says they can work around it. The workaround adds eight weeks and significant cost.

The person who was supposed to own the workflow internally leaves three months in. Nobody has the context to continue. The agency keeps billing. Nobody can make decisions. The project quietly stalls.

In March 2026, the FTC banned Air AI from marketing business opportunities after finding the company had misled entrepreneurs and small businesses with false claims about what their AI could deliver. The agency promised. Businesses paid. The product didn't match the pitch.

That's the extreme version. The mundane version is more common: an agency that tried, a business that wasn't ready, and six figures that turned into a workflow nobody uses.

The five things to do first

These aren't optional. They're the kind of formal readiness work behind the jump from a 14% success rate to 47%.

1. Define the problem in one sentence

Not "we want to use AI to improve our operations." One specific sentence: we want to automate the process of [specific task] so that [specific person] can stop spending [specific time] on it.

If you can't write that sentence without someone's help, you're not ready to commission a build. You're ready to have a conversation. Those are different things, and good agencies will charge you for both.

The sentence needs to be specific enough that you could hand it to someone who doesn't know your business and they'd know exactly what gets built and what it's supposed to do.

2. Know the state of your data

This is the readiness check that kills the most engagements, and the one most businesses skip because it's uncomfortable to look at.

AI-ready data requires consistent formatting, documented schemas, less than 5% missing values, clear ownership, and a continuous pipeline that doesn't depend on someone manually exporting a spreadsheet every Friday.
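Of those criteria, the missing-values one is the easiest to measure yourself. Below is a minimal sketch, assuming the data can be exported to CSV. The 5% threshold comes from the criteria above. The column names and sample export are hypothetical, and formatting, schemas, ownership and pipelines still need a human look.

```python
import csv
import io

# Threshold from the article: "less than 5% missing values".
MISSING_THRESHOLD = 0.05


def missing_rates(rows: list[dict], columns: list[str]) -> dict[str, float]:
    """Fraction of rows in which each column is absent, None, or blank."""
    total = len(rows)
    rates = {}
    for col in columns:
        missing = sum(
            1 for row in rows
            if row.get(col) is None or str(row.get(col)).strip() == ""
        )
        # An empty export counts as fully missing instead of dividing by zero.
        rates[col] = missing / total if total else 1.0
    return rates


def columns_over_threshold(rates: dict[str, float],
                           threshold: float = MISSING_THRESHOLD) -> list[str]:
    """Columns whose missing-value rate meets or exceeds the threshold."""
    return sorted(col for col, rate in rates.items() if rate >= threshold)


# Hypothetical export standing in for one of your real systems.
EXPORT = """customer_id,email,region
C1,a@example.com,North
C2,,South
C3,c@example.com,
C4,d@example.com,East
"""

rows = list(csv.DictReader(io.StringIO(EXPORT)))
rates = missing_rates(rows, ["customer_id", "email", "region"])
print(rates)
print(columns_over_threshold(rates))
```

In this toy export, one row in four is missing an email and one in four is missing a region, so both columns fail the 5% bar.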

Before you talk to any agency: find out where the data this build will depend on actually lives. Who owns it. When it was last cleaned. What happens when the person who maintains it is unavailable.

Internal teams consistently overestimate data readiness because they know the workarounds. They know which fields to ignore, which records to skip, which exports to treat as approximate. An AI system won't know any of that. It will fail exactly where humans had been quietly compensating for years.

3. Name the internal owner

This person exists before the agency starts work. Not during. Before.

They don't need to be technical. They need to care about the outcome, have authority to make decisions, have time genuinely allocated to this project (not "they'll figure it out alongside their normal role"), and plan to stay at the company through and past go-live.

Research consistently shows that sustained executive sponsorship produces a 68% project success rate. When sponsorship lapses, that number drops to 11%.

The agency can build something excellent. If nobody inside the business understands how to run it, adjust it, or advocate for it when something breaks, it will quietly die. If you can't name the internal owner today, you're not ready to start tomorrow.

4. Define success before anyone starts building

A specific metric. A baseline. A target. A timeframe.

Not "we'll know it's working when things feel better." Something you can measure without asking the agency whether it worked.
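One way to hold yourself to this is to write the definition down as data, with all four parts, before any work starts. Below is a minimal sketch. The field names, the invoice-matching example and its numbers are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class SuccessDefinition:
    metric: str                   # what gets measured
    baseline: float               # value measured before the build starts
    target: float                 # value that counts as success
    deadline: date                # end of the timeframe
    lower_is_better: bool = True  # e.g. hours spent; set False for e.g. revenue

    def is_met(self, measured: float, on: date) -> bool:
        """True only if the target is hit within the agreed timeframe."""
        if on > self.deadline:
            return False
        if self.lower_is_better:
            return measured <= self.target
        return measured >= self.target

    def progress(self, measured: float) -> float:
        """Share of the baseline-to-target gap closed so far."""
        gap = self.target - self.baseline
        return (measured - self.baseline) / gap if gap else 1.0


# Hypothetical example: finance lead's weekly hours on invoice matching.
invoice_hours = SuccessDefinition(
    metric="Hours per week the finance lead spends on invoice matching",
    baseline=12.0,
    target=4.0,
    deadline=date(2026, 12, 31),
)

print(invoice_hours.is_met(6.0, date(2026, 9, 30)))
print(invoice_hours.progress(6.0))
```

Dropping from 12 hours to 6 closes three quarters of the gap but doesn't meet the target. That is exactly the kind of answer you can reach without asking the agency.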

73% of failed AI pilots had no defined success metric before launch. When success is undefined, the agency can always argue it succeeded. You have no basis to disagree. You end the engagement with differing interpretations and no data to resolve them.

Write it down before the statement of work gets signed. If the agency resists committing to a measurable outcome, that tells you something.

5. Budget for what comes after the build

This is the number nobody mentions in the sales process.

The build is one cost. Running the build is another. AI workflows depend on model APIs (OpenAI, Anthropic, others) that charge per use. Those costs are not included in the agency fee. At low volume they're negligible. At real business volume, they add up fast, because every additional run adds to the bill.
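You can estimate this yourself before the sales call. Below is a minimal sketch, assuming per-token pricing with separate input and output rates, which is how several major model APIs bill. The run volumes, token counts and prices are placeholders, not any provider's actual rates.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageAssumptions:
    runs_per_month: int           # how often the workflow executes
    input_tokens_per_run: int     # prompt plus context sent to the model
    output_tokens_per_run: int    # tokens the model generates
    usd_per_million_input: float  # placeholder price: check your provider
    usd_per_million_output: float # placeholder price: check your provider


def monthly_api_cost(u: UsageAssumptions) -> float:
    """Estimated monthly model-API spend in USD. Scales linearly with runs."""
    per_run = (
        u.input_tokens_per_run * u.usd_per_million_input
        + u.output_tokens_per_run * u.usd_per_million_output
    ) / 1_000_000
    return per_run * u.runs_per_month


# Same hypothetical workflow at pilot volume and at production volume.
pilot = UsageAssumptions(500, 3_000, 500, 3.0, 15.0)
production = UsageAssumptions(200_000, 3_000, 500, 3.0, 15.0)

for label, usage in [("pilot", pilot), ("production", production)]:
    print(f"{label}: ${monthly_api_cost(usage):,.2f} per month")
```

Under these assumptions, each run costs a little under two cents. That is roughly $8 a month in a 500-run pilot and roughly $3,300 a month at 200,000 runs, before any maintenance.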

Then there's maintenance. Models change. APIs update. Prompts that worked in January break in March. Someone has to manage that. Either you pay the agency to stay on retainer, you hire someone who can, or you watch the workflow degrade until it stops working.

Ask any agency you're evaluating: what does this cost to run at our expected volume? What does maintenance look like in year two? If the answers are vague, they are scoping a build, not a solution.

If you fail one of these checks

Don't stop. Don't wait for perfection before starting anything.

Do the work that gets you ready first. That might mean two months of data cleanup. It might mean hiring an internal operator before you bring in an agency. It might mean getting clear on the problem before paying someone else to find it for you.

The agencies worth working with will respect this. Some will help you get ready as a scoped piece of work before the main engagement. That is what a partner does. An agency that pushes back on your decision to take time before committing is optimising for their pipeline, not your outcome.

The short version of everything above: if you can't clearly answer what gets built, what data it runs on, who owns it, what success looks like, and what it costs to run, you're not ready. Get those answers first.
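If it helps to keep the five answers in one place, here is a minimal sketch of the checklist as a record. The field names and example answers are hypothetical. A blank field flags a check you haven't completed. The code can't judge whether an answer is good, only whether one exists.

```python
from dataclasses import dataclass, fields


@dataclass
class ReadinessAnswers:
    what_gets_built: str = ""   # the one-sentence problem statement
    data_it_runs_on: str = ""   # where the data lives and who maintains it
    internal_owner: str = ""    # named person with time and authority
    success_metric: str = ""    # metric, baseline, target, timeframe
    cost_to_run: str = ""       # API spend plus year-two maintenance


def unanswered(answers: ReadinessAnswers) -> list[str]:
    """Names of readiness questions still blank, in checklist order."""
    return [f.name for f in fields(answers)
            if not getattr(answers, f.name).strip()]


# Hypothetical partial answers.
answers = ReadinessAnswers(
    what_gets_built="Automate invoice matching so the finance lead "
                    "stops spending 12 hours a week on it",
    internal_owner="Finance lead",
)
print(unanswered(answers))
```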

Everything after that gets faster, cheaper, and more likely to actually work.

When you are ready, the conversation changes completely

You know what you're building. You know your data. You have an owner. You have a metric. You have a budget that accounts for the full lifecycle. At that point you're evaluating fit, not fundamentals: track record, specific experience with your type of workflow, whether they build things you can own or things that keep you dependent.

If you're still mapping out what AI should do in your business before bringing anyone in, this blueprint helps you understand where AI can benefit your business, so you're not paying an agency to help you figure out what you need. That's the most expensive way to do it.

The agencies aren't the problem. The readiness gap is.

And it's yours to close, because nobody else has any incentive to close it for you.

Want to Read More

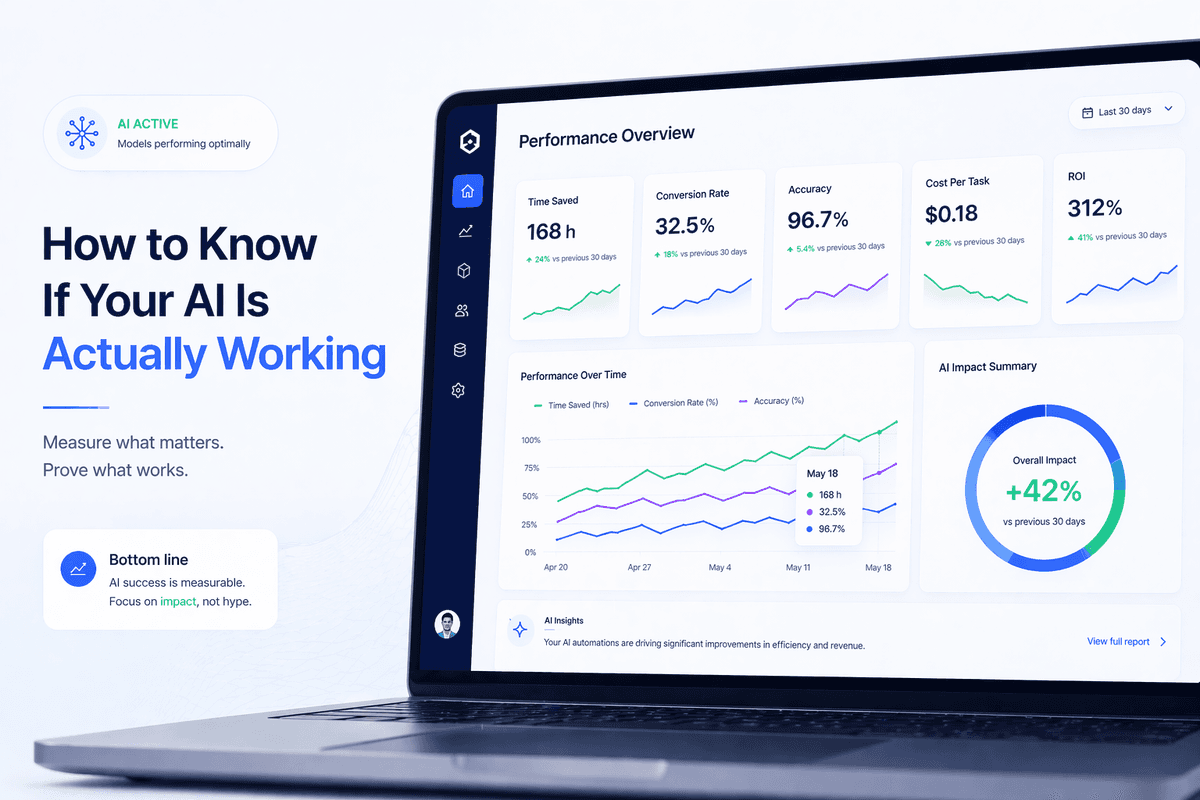

How to Know If Your AI Is Actually Working

95% of enterprise AI pilots delivered no measurable impact. Most small businesses can't answer whether their AI is working either. The problem isn't the tools, it's that nobody set a baseline before they started.