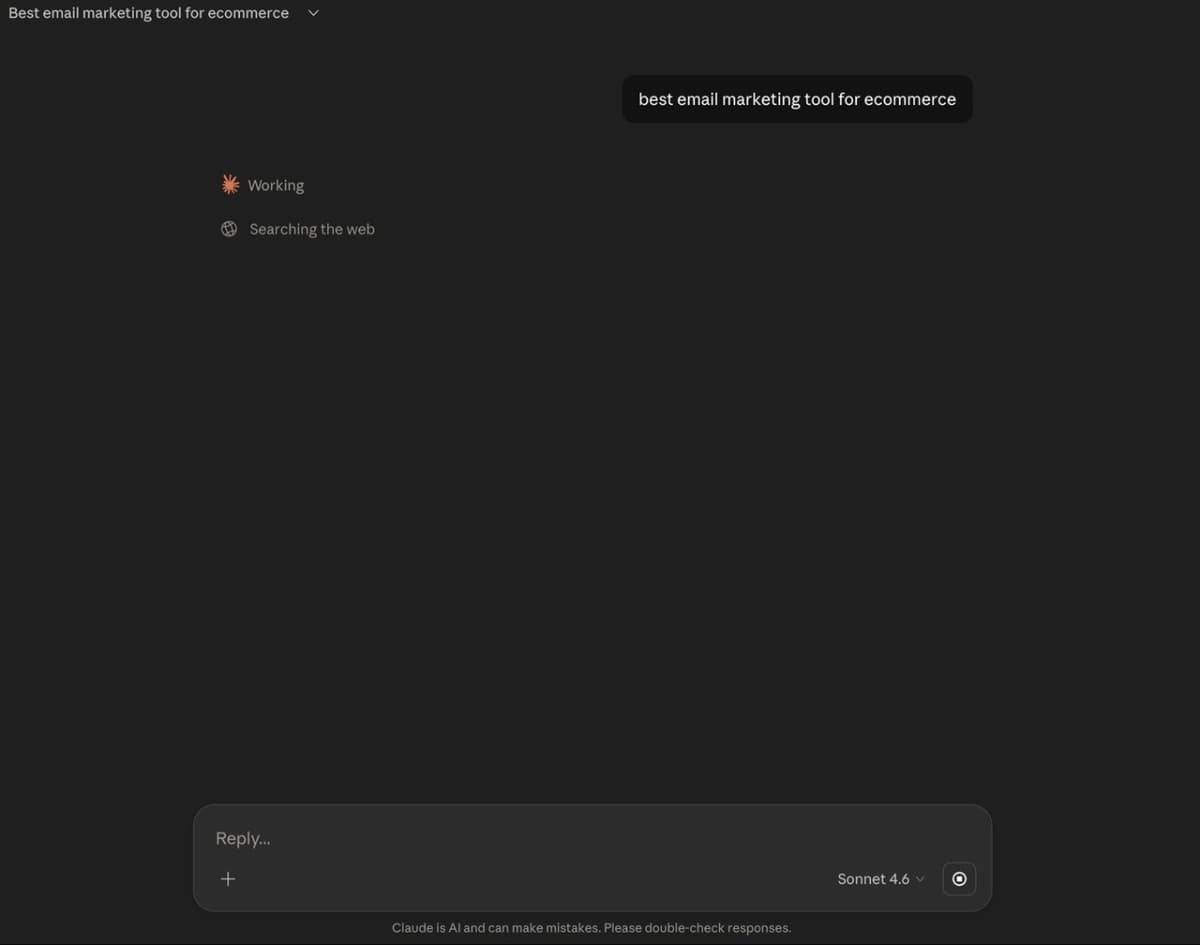

95% of enterprise AI pilots delivered no measurable P&L impact. Most small businesses can't answer whether their AI is working either. The problem isn't the tools. It's that nobody set a baseline before they started.

Someone asks you what AI has done for your business this year.

Maybe it's an investor. Maybe it's your business partner. Maybe it's your own accountant, looking at a line of monthly subscriptions and raising an eyebrow. Doesn't matter who it is. The question lands and you answer it. You talk about time saved. Content moving faster. The team not having to do the repetitive stuff anymore. It sounds right. It feels right.

But there's no number in it. No before. No after. Just a general shape of improvement that you believe is true and cannot prove.

Most founders using AI tools right now are in exactly this position. That's not a criticism. It's a description of how AI got adopted: fast, tool by tool, on instinct, because something seemed useful and stayed. The problem isn't that you're using AI. The problem is you never took a picture of what things looked like before you started.

The data suggests this is a widespread pattern, not a personal failing.

MIT's The GenAI Divide (published in 2025, based on 300 public AI deployments and surveys of 153 business leaders) found that 95% of enterprise AI pilots delivered no measurable P&L impact within six months. Not a niche finding. Not a fluke. A consistent pattern across industries and company sizes.

A separate RGP survey of 200 U.S. finance chiefs found that only 14% have seen clear, measurable impact from their AI investments to date. These are CFOs. People whose job is to track where money goes and what comes back.

Only 19% of businesses track AI-specific KPIs at all, according to Digital Applied's 2026 content marketing research. Most organisations are measuring whether their AI is being used. Almost none are measuring whether it's working.

This isn't an enterprise problem that doesn't apply to you because your business is smaller and more agile. It's a measurement problem. And smaller businesses are more exposed, not less, because there are fewer people whose job it is to ask the uncomfortable questions.

The Real Problem: You Never Set a Baseline

AI tools got adopted the same way most software gets adopted. Someone tried it, it felt useful, it stuck. The subscription went on the card. The tool became part of the routine. Nobody stopped to record what the routine looked like before.

That's the baseline problem. And it's almost always the root cause when a business can't answer the question "is this working."

You cannot measure improvement without knowing where you started. A baseline is just that: a number that represents normal before the thing you changed. Without it, you're comparing your current situation to a feeling you had about your previous situation. That's not measurement. That's memory, and memory is optimistic.

Every meaningful claim about AI working (time saved, output up, costs down) requires a before number to be real. Most businesses don't have one. So when the question comes, the answer is vague. Not because the AI isn't helping. Because there's nothing concrete to compare it to.

What to Actually Measure

Not fifteen metrics. Three categories. Pick one measure from each that's relevant to the tool you're evaluating.

Time. How long did this specific task take before the tool? How long does it take now? Hours per week is a real number. "Faster" is not. If a writing tool has been running for six months, you should be able to say: drafting a proposal used to take three hours, now it takes forty-five minutes. That's a baseline and an outcome. That's a result.

A five-person operations consultancy uses an AI drafting tool for client status reports. Before the tool, a consultant spent three hours every Friday pulling meeting notes, structuring the update, and writing the summary. After six weeks: forty-five minutes. Same quality, checked and edited before it goes out. That's two hours and fifteen minutes recovered per person per week. At their internal rate of $90 an hour, that's roughly $200 a week, per consultant, from one use case. The number existed because someone wrote down "three hours" before the tool went live. Without that number, the answer to "is it working?" would have been "yeah, feels faster." Feels faster is not a number.
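The arithmetic behind that example is small enough to write down. Here is a minimal sketch in Python, using the figures from the consultancy above; the function name `weekly_saving` and its parameters are made up for illustration, not part of any tool.

```python
def weekly_saving(before_hours, after_hours, hourly_rate):
    """Return (hours recovered, dollar value) per person per week."""
    hours_saved = before_hours - after_hours
    return hours_saved, hours_saved * hourly_rate


# Figures from the consultancy example: 3 hours before, 45 minutes after, $90/hour.
hours, dollars = weekly_saving(before_hours=3.0, after_hours=0.75, hourly_rate=90)
print(f"{hours} hours, ${dollars:.2f} per consultant per week")
# prints "2.25 hours, $202.50 per consultant per week"
```

The "roughly $200" in the example is $202.50 before rounding. The calculation only works because the `before_hours` value was recorded in advance.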

Output quality or volume. Did the number of things produced go up? Did error rates go down? Did customer response time change? Proposals sent, support tickets resolved, emails answered per day: pick the one that maps to what the tool actually does. One output metric per tool. Track more than one and you'll measure none of them properly.

Revenue or cost line. This is the hardest category and the most important. Does this tool touch something with a dollar value attached? Deals closed, cost per acquisition, support cost per ticket, ad spend efficiency? If yes, track it. If the tool doesn't connect to any revenue or cost line after six months, you're operating on faith. Say so plainly, so you can make a real decision about whether to keep paying for it.

Most AI tools won't produce a clean revenue number. Time and quality are legitimate measures on their own. But if six months of use can't demonstrate anything in any of the three categories, that's not a measurement failure. That's an answer.

The 30-Day Fix

Pick one AI tool. The one with the biggest question mark over it.

Before next week, record three numbers: how long the relevant task takes right now, how often it gets done, and what it costs (person-hours multiplied by your team's approximate hourly rate). Write them down somewhere you'll find them. That's your baseline.

In thirty days, measure the same three numbers again.

That is the entire method. It takes twenty minutes to set up. The only hard part is doing it before you forget you never did it.
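If a spreadsheet feels like too much, the same method fits in a few lines of Python. This is a sketch, not a prescribed tool: the `Snapshot` class, its field names, and the `compare` function are hypothetical, and the sample numbers are borrowed from the consultancy example earlier in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    """The three numbers recorded at baseline and again after 30 days."""
    minutes_per_task: float   # how long the task takes
    tasks_per_week: float     # how often it gets done
    hourly_rate: float        # team's approximate hourly rate, in dollars

    @property
    def weekly_hours(self) -> float:
        return self.minutes_per_task * self.tasks_per_week / 60

    @property
    def weekly_cost(self) -> float:
        return self.weekly_hours * self.hourly_rate


def compare(baseline: Snapshot, later: Snapshot) -> dict:
    """Difference between the baseline and the 30-day measurement."""
    return {
        "hours_saved_per_week": baseline.weekly_hours - later.weekly_hours,
        "cost_saved_per_week": baseline.weekly_cost - later.weekly_cost,
        "volume_change_per_week": later.tasks_per_week - baseline.tasks_per_week,
    }


# Illustrative values only: one report a week, 3 hours before, 45 minutes after.
baseline = Snapshot(minutes_per_task=180, tasks_per_week=1, hourly_rate=90)
day_30 = Snapshot(minutes_per_task=45, tasks_per_week=1, hourly_rate=90)
result = compare(baseline, day_30)
print(result)
```

The point of the structure is that both snapshots use the same three fields, so the day-30 measurement is forced to be comparable with the baseline.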

For every new AI tool from this point forward: five minutes of baseline recording before it goes live. Time, volume, cost. Current state, captured. That's the habit that makes the question answerable.

How to Make the Cut or Keep Decision

Three months of active use. No measurable movement in time, quality, or cost. Cut it.

Not pause it to revisit. Not put it on a list. Cut it. The sunk cost is already gone. The ongoing subscription is the only part of this you still control.

If a tool is clearly saving time but you can't connect it to revenue, keep it for now. Time has value. But find the revenue connection within the next quarter, or accept that this tool has a ceiling on what it can justify and budget for it accordingly.

If a tool has a measurable impact on a revenue or cost line, that's where you invest more. More usage, more training for the team, more integration into the workflow. That's where the real return lives.
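The three rules above can be read as a small decision function. The sketch below is one possible encoding in Python. The names `Decision` and `decide` are hypothetical, and the `TOO_EARLY` outcome is an assumption added for tools with under three months of use, a case the rules above don't address.

```python
from enum import Enum


class Decision(Enum):
    CUT = "cut"
    KEEP_FOR_NOW = "keep for now, find the revenue link next quarter"
    INVEST = "invest more"
    TOO_EARLY = "keep measuring"  # assumption: not covered by the rules above


def decide(months_active: int, time_or_quality_moved: bool,
           revenue_or_cost_moved: bool) -> Decision:
    """Apply the cut-or-keep rules to one tool's measurements."""
    if revenue_or_cost_moved:
        return Decision.INVEST
    if time_or_quality_moved:
        return Decision.KEEP_FOR_NOW
    if months_active >= 3:
        return Decision.CUT
    return Decision.TOO_EARLY


# Three months of active use and nothing has moved.
print(decide(months_active=3, time_or_quality_moved=False,
             revenue_or_cost_moved=False).value)
```

Both boolean inputs have to come from a before-and-after comparison. Without a baseline, neither can be answered honestly.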

The Kyndryl 2025 Readiness Report found that 61% of senior business leaders now feel more pressure to prove AI ROI than they did a year ago. That pressure is only going to increase. The founders who can answer the question cleanly, with a number, a before, and an after, will be in a different position to those who can't.

Setting a baseline is not a vote of no confidence in AI. It's not a sign you're sceptical or slow to adopt. It's what turns a tool you believe in into a tool you can defend.

The question "is this working?" is not a threat to using AI. It's what makes using AI real.
